IEEE SaTML

Created on

July 29, 2024

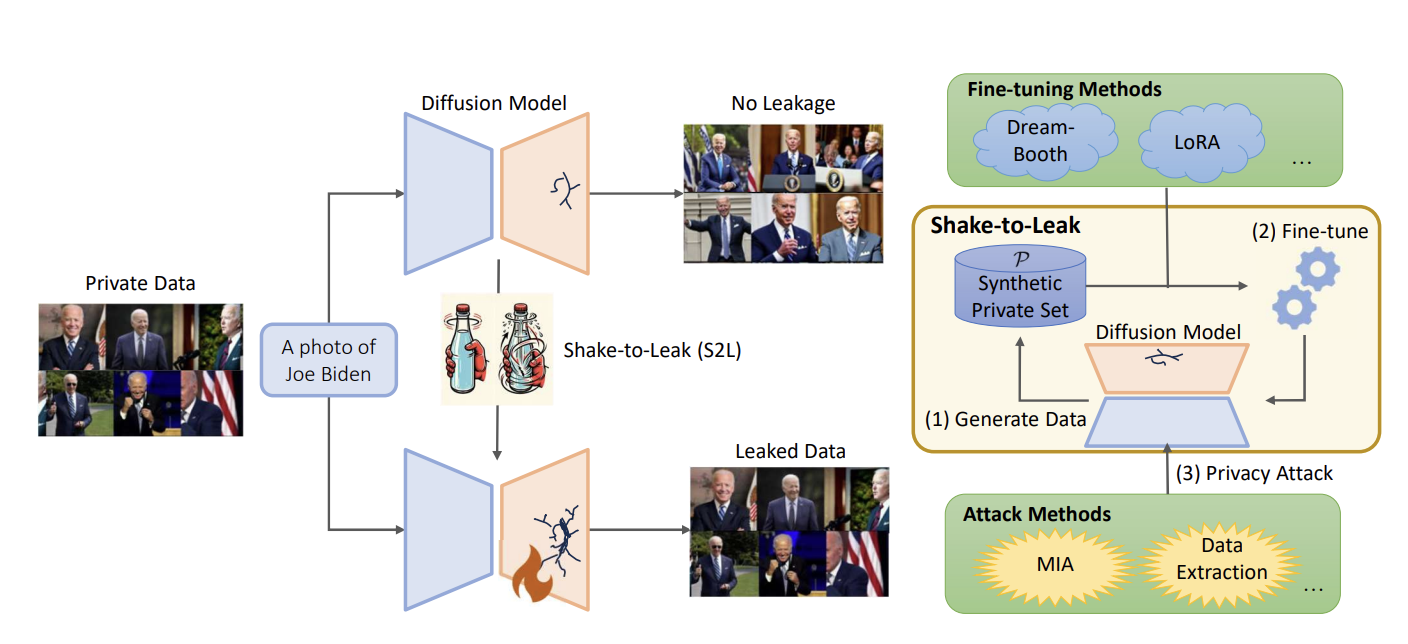

Shake to Leak: Amplifying the Generative Privacy Risk through Fine-tuning

Abstract

While diffusion models have recently demonstrated remarkable progress in generating realistic images, they also raise privacy risks: published models or APIs could generate training images and thus leak privacy-sensitive training information. In this paper, we reveal a new risk, Shake-to-Leak (S2L): fine-tuning a pre-trained model with manipulated data can amplify its existing privacy risks. We demonstrate that S2L can occur under various standard fine-tuning strategies for diffusion models, including concept-injection methods (DreamBooth and Textual Inversion), parameter-efficient methods (LoRA and Hypernetwork), and their combinations. In the worst case, S2L raises the AUC of the state-of-the-art membership inference attack (MIA) on diffusion models by 5.4% (absolute difference) and increases the number of extracted private samples from almost 0 to 15.8 on average per target domain. This discovery underscores that the privacy risk of diffusion models is even more severe than previously recognized.
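To make the "absolute difference" in AUC concrete, here is a minimal Python sketch of how the AUC of a score-based membership inference attack can be compared before and after fine-tuning. It is an illustration only, not the paper's attack or evaluation code: the function names `roc_auc` and `absolute_auc_gain` are hypothetical, and the scores are made-up toy values, not results from the paper.

```python
def roc_auc(member_scores, nonmember_scores):
    """AUC as the probability that a random member outscores a random
    non-member (ties count as half a win)."""
    wins = 0.0
    for m in member_scores:
        for n in nonmember_scores:
            if m > n:
                wins += 1.0
            elif m == n:
                wins += 0.5
    return wins / (len(member_scores) * len(nonmember_scores))


def absolute_auc_gain(auc_before, auc_after):
    """Absolute AUC difference, expressed in percentage points."""
    return (auc_after - auc_before) * 100.0


# Toy attack scores (assumed values): higher means "more likely a training member".
nonmembers = [0.5, 0.2, 0.45, 0.35]
members_before = [0.6, 0.4, 0.7, 0.3]   # scores against the pre-trained model
members_after = [0.8, 0.55, 0.9, 0.4]   # scores after a hypothetical fine-tuning step

auc_before = roc_auc(members_before, nonmembers)
auc_after = roc_auc(members_after, nonmembers)
gain = absolute_auc_gain(auc_before, auc_after)
```

With these toy scores the AUC moves from 0.6875 to 0.875, an absolute gain of 18.75 points. The 5.4% figure in the abstract is a difference of this kind, as opposed to a relative (ratio) improvement.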

Authors

Zhangheng Li, Junyuan Hong, Bo Li, Zhangyang Wang (IEEE SaTML 2024)