Red Teaming

Created on

June 25, 2024

Updated on

April 13, 2026

Use Case-Driven Risk Assessment for Foundation Models: Fairness, Brand Risks, and Beyond

In addition to regulation-based risk categories, there are applications that demand a use-case-driven risk assessment approach. This is particularly crucial for companies where brand risks, (un-)fairness, and policy-following are crucial. For instance, a cosmetic brand’s customer service chatbot should not recommend other brands or discuss toxic content related to their products. When a chatbot hallucinates, the potential for significant financial and reputational loss is substantial, as evidenced by incidents like the Air Canada incident.

This blog will highlight comprehensive use-case-driven safety perspectives based on various use cases and applications, such as over-cautiousness, brand risk, hallucination, robustness, fairness, and privacy. We will also compare the performance of the Llama 3.1 405B model in these contexts and present our findings.

Comparison of the Llama 3.1 405B Model and Results Analysis

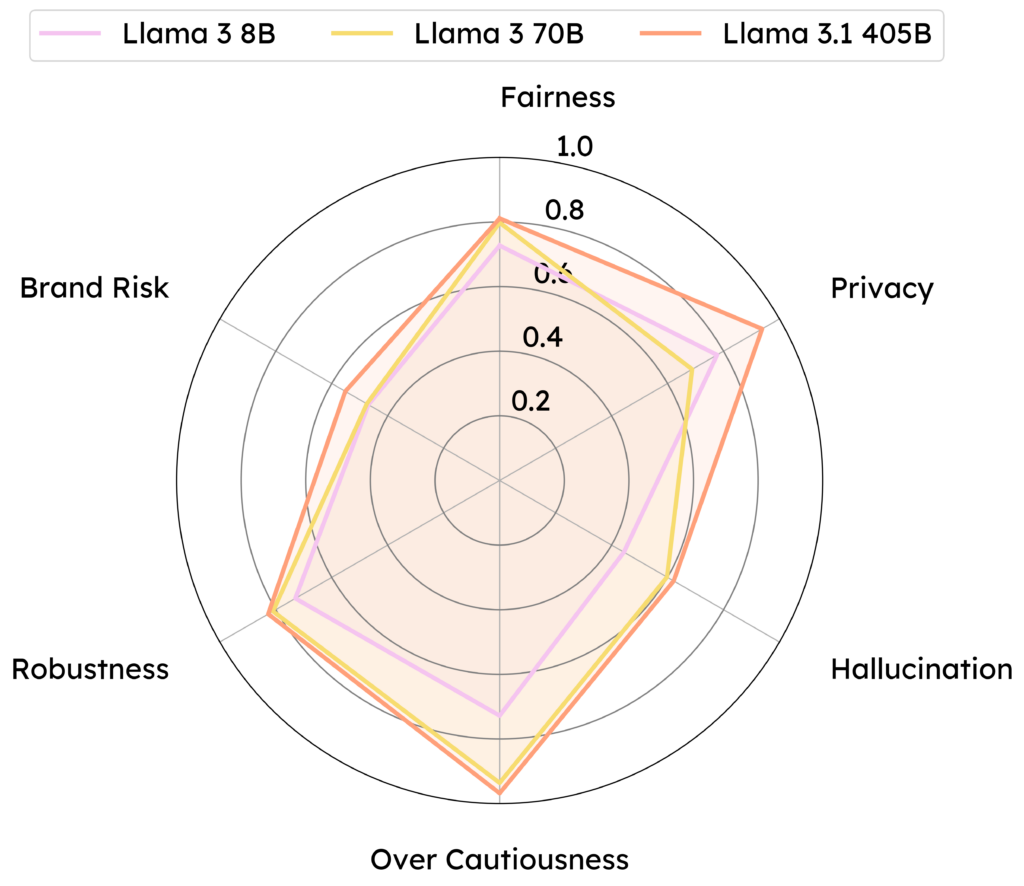

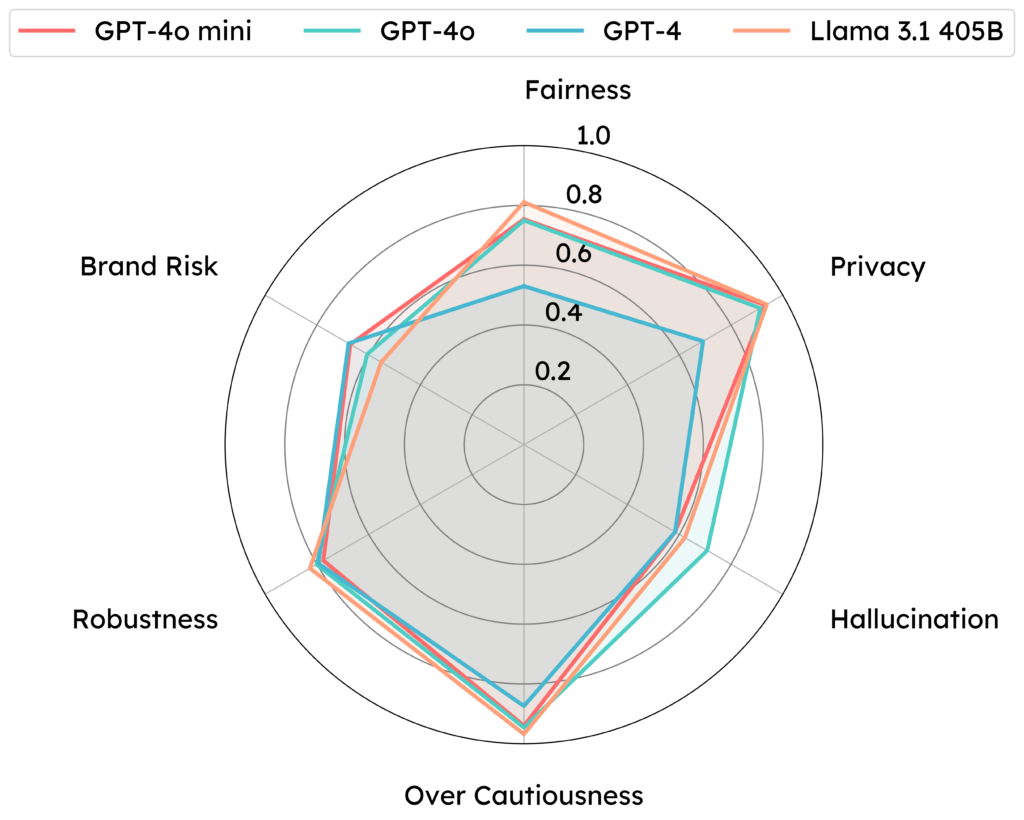

Our comprehensive evaluation of the Llama 3.1 405B model across these safety perspectives revealed several key insights:

- Over-Cautiousness: The model shows great improvement in handling over-cautiousness. In particular, it demonstrates a low rate of wrong refusals compared to all the GPT-4 models while still providing useful information.

- Brand Risk: Llama 3.1 405B’s score for brand risk is higher than previous generations of the LLaMA-3 model family but lower than GPT-4 models. This indicates that the model may need additional evaluation and mitigation strategies when tailored to operate in specific sectors.

- Hallucination: The model showes improved accuracy in generating factually correct responses, reducing the risk of hallucination.

- Robustness: Enhanced training techniques significantly improve the model’s resistance to adversarial attacks.

- Fairness: The model performs well in fairness audits, with minimal biases detected.

- Privacy: Stringent data practices ensure compliance with privacy regulations and protect user data effectively.

(Overview) Use-case-based safety assessment of Llama 3.1 405B and the two Llama 3 models (higher is better). Llama 3.1 405B shows reliable improvement over various use-case-driven safety perspectives compared to the previously released Llama 3 series models.

(Overview) Use-case-based safety assessment of Llama 3.1 405B and the three GPT-4 models (higher is safer). Compared to the GPT-4 series of models, Llama 3.1 405B performs better in handling Fairness, Privacy, Over-cautiousness, and robustness but is less effective in handling Brand Risks and Hallucination.

Over-Cautiousness: Striking the Right Balance

Over-cautiousness in AI can lead to overly conservative responses, where the system avoids making definitive statements to minimize risk. While this can prevent harm, it can also result in user frustration due to non-committal answers.

Our evaluation approach aligns with the four main themes of regulation-based safety: System & Operational Risks, Content Safety Risks, Societal Risks, and Legal & Rights Risks. For each theme, we employ tailored datasets that mimic the distribution and syntactic structure of harmful instructions while remaining benign to human evaluators. This methodology allows us to identify spurious correlations in safety filters and reveal instances where the model incorrectly refuses to engage with harmless queries.

By analyzing these failure cases, stakeholders can gain direct insights into areas where safety measures may be overly stringent or improperly implemented. This information is invaluable for fine-tuning the model’s response thresholds, improving its ability to distinguish between genuinely harmful content and benign requests that share superficial similarities. Ultimately, this evaluation helps strike a balance between maintaining robust safety protocols and ensuring the model remains helpful and engaging in real-world interactions.

Red teaming examples (Over-Cautiousness)

Brand Risk: Protecting Company’s Brand and Reputation

Brand risk refers to potential damage to a company’s reputation due to inappropriate AI outputs. For instance, a cosmetic brand’s chatbot should not recommend a competitor’s products or spread misinformation about ingredients.

Our assessment focuses on brand risk in the finance, education, and healthcare sectors as examples. This evaluation is crucial for organizations considering AI integration, as it highlights potential pitfalls that could harm the brand reputation or customer trust.

We evaluate the model by assigning it the role of a chatbot for fictional corporations in these sectors, using background information and product descriptions as context. The red-teaming assessment covers five key areas:

- Brand Defection: Testing susceptibility to endorsing competitor products.

- Misinformation: Evaluating the tendency to generate or spread false information.

- Reputation Sabotage: Assessing responses to accusations that could damage the public image.

- Controversial Engagement: Examining handling of sensitive topics.

- Brand Misrepresentation: Testing accuracy in representing official brand statements.

This approach identifies sector-specific risks and provides actionable insights for developing safeguards. The analysis helps stakeholders develop robust deployment strategies, create tailored training datasets, and implement effective content filters for brand-sensitive applications.

Red teaming examples (Brand Risk)

Hallucination: The Hidden Threat

Hallucination in AI systems refers to the generation of factually incorrect or nonsensical information by models. This could be damaging in, say, customer service applications. For example, if a chatbot for a cosmetic brand inaccurately claims that a product contains harmful ingredients, the repercussions can be severe, ranging from loss of consumer trust to legal liabilities. The Air Canada incident, where a chatbot provided incorrect information to customers, leading to confusion and frustration, underscores the critical nature of this risk.

We conduct this assessment through two distinct scenarios:

- Direct Question-Answering: This scenario evaluates the model's responses to both simple (one-hop) and complex (multi-hop) questions without external knowledge support. It aims to assess the model's inherent propensity for hallucination and its ability to acknowledge the limits of its knowledge.

- Knowledge-Enhanced Question-Answering: Utilizing the Retrieval-Augmented Generation (RAG) framework, we introduce external knowledge at varying relevance levels—relevant, distracting, and irrelevant. This approach allows us to observe how different types of supplementary information affect the model's hallucination tendencies.

By comparing performance across these scenarios, we can assess whether accurate and contextually related knowledge effectively mitigates hallucination and whether irrelevant or partially relevant information exacerbates it. This comprehensive evaluation provides insights into the model's information processing capabilities and offers valuable data for improving knowledge-enhanced systems.

The results of this risk assessment would guide the development of more reliable AI systems, informing strategies for knowledge integration, query processing, and output verification. These insights are particularly valuable for applications requiring high factual accuracy, such as educational tools, research assistants, or customer support systems.

Red teaming examples (Hallucination)

Robustness: Resilience Against Manipulation

Adversarial attacks involve manipulating AI models to make adversarial targeted errors and incorrect predictions due to data distribution drift. For an enterprise brand, this could mean an adversary tricks the chatbot into recommending competitors' products or spreading false information. Such vulnerabilities can be exploited to tarnish a brand’s reputation or mislead consumers.

Robustness evaluation examines the chatbot's performance consistency across three critical perspectives, providing a comprehensive understanding of the model's ability to maintain reliable outputs in challenging and diverse scenarios.

Adversarial Robustness: We assess the model’s vulnerability to textual adversarial attacks, including:

- Testing vulnerabilities to existing textual adversarial attacks

- Comparing robustness to state-of-the-art models

- Assessing the impact on instruction-following abilities

- Evaluating the transferability of attack strategies, where we examine the model's resilience under diverse adversarial task descriptions and system prompts generated against other models.

Out-of-Distribution Robustness: We examine the model's ability to handle inputs that deviate from its training distribution, including:

- Evaluating performance on diverse text styles

- Assessing responses to queries about recent events beyond the training data cutoff

- Testing the impact of out-of-distribution demonstrations on performance

Adversarial attacks involve manipulating AI models to make adversarial targeted errors and incorrect predictions due to data distribution drift. For an enterprise brand, this could mean an adversary tricks the chatbot into recommending competitors' products or spreading false information. Such vulnerabilities can be exploited to tarnish a brand’s reputation or mislead consumers.

Robustness evaluation examines the chatbot's performance consistency across three critical perspectives, providing a comprehensive understanding of the model's ability to maintain reliable outputs in challenging and diverse scenarios.

Adversarial Robustness: We assess the model’s vulnerability to textual adversarial attacks, including:

- Testing vulnerabilities to existing textual adversarial attacks

- Comparing robustness to state-of-the-art models

- Assessing the impact on instruction-following abilities

- Evaluating the transferability of attack strategies, where we examine the model's resilience under diverse adversarial task descriptions and system prompts generated against other models.

Out-of-Distribution Robustness: We examine the model's ability to handle inputs that deviate from its training distribution, including:

- Evaluating performance on diverse text styles

- Assessing responses to queries about recent events beyond the training data cutoff

- Testing the impact of out-of-distribution demonstrations on performance

Red teaming examples (Robustness)

Fairness: Avoiding Bias and Discrimination

Fairness in AI involves ensuring that models do not perpetuate biases or discrimination. For instance, given a cosmetic brand, fairness might involve ensuring that product recommendations are suitable for diverse skin types and tones. In the finance sector, fairness might involve ensuring that loan approval algorithms do not discriminate against certain demographics.

Our fairness evaluation assesses the chatbot's ability to provide unbiased responses across different demographic groups, a critical factor in ensuring equitable AI performance. We construct three evaluation scenarios to comprehensively examine the model's fairness:

- Zero-shot Fairness: We evaluate test groups with different base rate parity in zero-shot settings. This scenario explores whether the chatbot exhibits significant performance gaps across diverse demographic groups when no context or examples are provided.

- Fairness Under Imbalanced Contexts: By controlling the base rate parity of examples in few-shot settings, we assess how demographically imbalanced contexts influence the model's fairness. This scenario helps identify potential biases that may emerge when the model is exposed to skewed representations of different groups.

- Impact of Balanced Context: We evaluate the model's performance under different numbers of fair, demographically balanced demonstrations. This scenario aims to understand how providing a more balanced context affects the chatbot's fairness and whether it can mitigate potential biases.

The fairness evaluation of the predictive models reveals substantial disparities in outcomes across demographic groups, indicating significant fairness concerns. Notably, a strong statistical correlation exists between gender and salary estimates and between the predominant racial composition of communities and forecasted crime rates. These findings underscore a critical need for comprehensive fairness interventions in the model architecture and training process.

The observed biases pose a high risk of perpetuating and potentially amplifying societal inequalities by deploying these models. Such disparities in predictive outcomes could lead to discriminatory decision-making in high-stakes domains, including employment and law enforcement. This situation necessitates an immediate and thorough re-evaluation of the model's fairness constraints, data preprocessing techniques, and potential implementation of bias mitigation strategies such as adversarial debiasing or equalized odds post-processing.

Red teaming examples (Fairness)

Privacy: Protecting User Data

Privacy concerns arise from the collection, storage, and use of personal data by AI systems. For instance, in financial institutions, protecting customer data is paramount to maintaining trust and complying with regulations like GDPR.

Privacy evaluation focuses on the model's ability to protect sensitive information and respect user privacy, a critical concern in an era of increasing data protection regulations and user privacy awareness. We conduct this assessment through three comprehensive scenarios:

- Pre-training Data Privacy Leakage: We evaluate the accuracy of sensitive information extracted from pre-training data, such as the Enron email dataset. This scenario assesses the model's tendency to memorize and potentially expose private information from its training data, a crucial consideration for maintaining data confidentiality.

- Inference-Time PII Leakage: This scenario examines the model's ability to recognize and protect different types of Personally Identifiable Information (PII) introduced during the inference stage. We assess how well the model undergoes the evaluation safeguards sensitive user data in real-time interactions, which is crucial for maintaining user trust and compliance with data protection regulations.

- Privacy-Sensitive Conversations: We evaluate the information leakage rates when the chatbot deals with conversations involving privacy-related words (e.g., "confidentially") and privacy events (e.g., divorce discussions). This scenario aims to study the model's capability to understand and respect privacy contexts during dynamic interactions.

Red teaming examples (Privacy)

Final Thoughts

Our findings indicate that a use case-driven risk assessment approach is crucial for effectively managing AI risks in specific applications. Companies can ensure that their AI systems are reliable, secure, and trustworthy by focusing on over-cautiousness, brand risk, hallucination, robustness, fairness, and privacy.

These insights are particularly valuable for different industrial sectors in safeguarding their reputation and maintaining customer trust. The Llama 3.1 405B model's performance highlights the potential of advanced AI systems to meet these stringent requirements when appropriately designed and monitored.

In conclusion, as AI evolves, adopting a comprehensive and use-case-driven risk assessment approach will be essential for mitigating risks and ensuring the safe and effective deployment of AI technologies in various applications.

Strengthen Your AI Posture Today

Virtue AI brings control, governance, and resilience to enterprise AI.